01

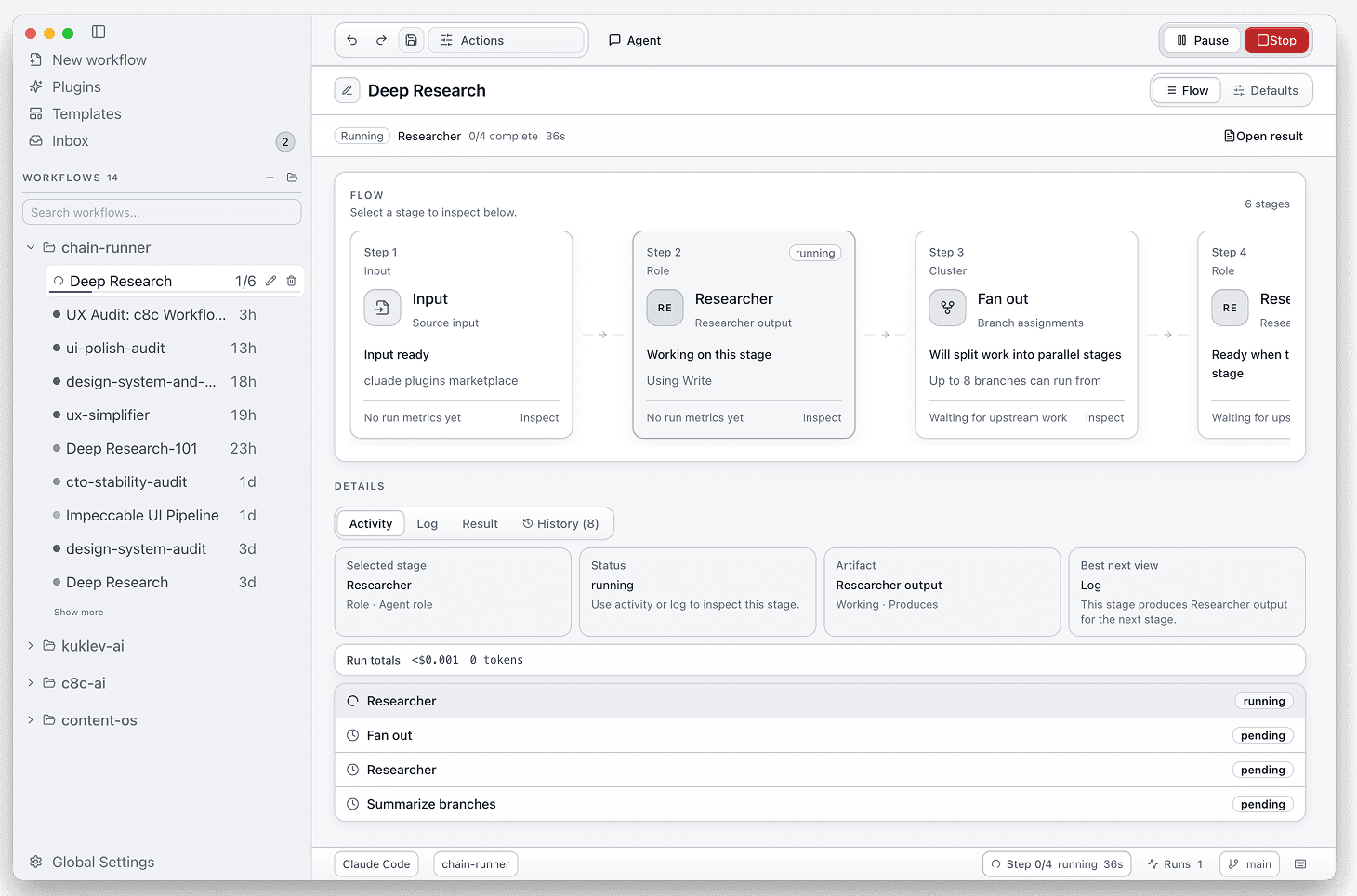

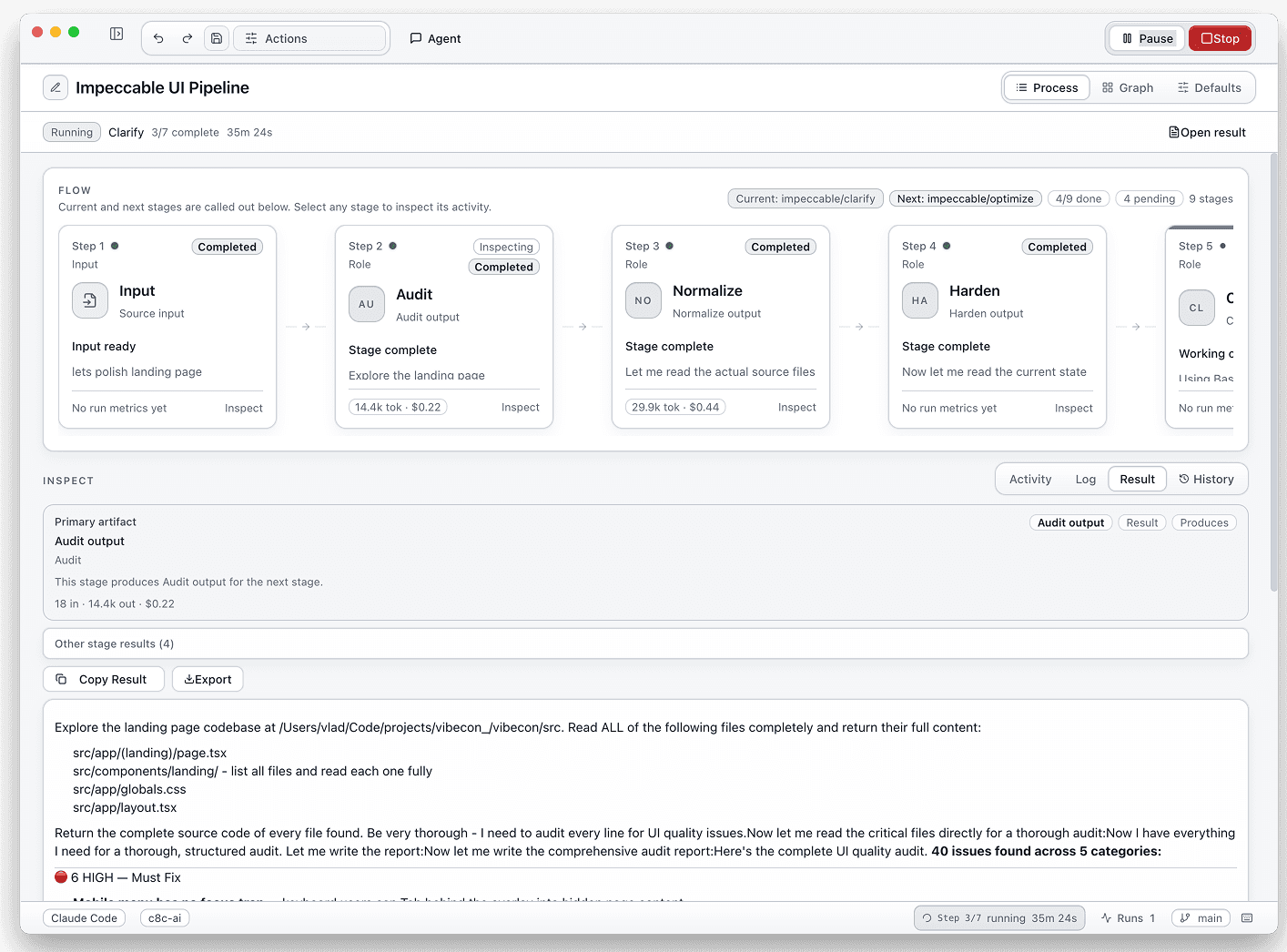

Runs your skills in sequence — stops only when it needs you.

Pick a template or describe what you want. c8c builds the flow and runs it. You come back to results, not to the next manual step.

Start from a template or generate the flow from a task description.

Stages execute in order, each starting with fresh context.

You come back to named results, not another handoff.
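
For readers who want a concrete picture, here is a minimal Python sketch of the pattern described above: stages run in order, each gets a fresh context, the run pauses only when a stage needs you, and results are stored by name. This is not c8c's actual code or API. `Stage`, `NeedsInput`, and `run_flow` are hypothetical names made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


class NeedsInput(Exception):
    """Hypothetical signal: a stage cannot continue without the user."""


@dataclass
class Stage:
    name: str
    run: Callable[[dict], str]  # takes a fresh context, returns a result


def run_flow(stages: list, task: str) -> tuple:
    """Run stages in order; return (named results, pause message or None)."""
    results: dict = {}
    for stage in stages:
        # Fresh context: a new dict per stage holding only the task and a
        # copy of earlier named results, so no scratch state leaks across.
        context = {"task": task, "results": dict(results)}
        try:
            results[stage.name] = stage.run(context)
        except NeedsInput as pause:
            # Stop only when a stage needs the user; keep what is done.
            return results, f"{stage.name}: {pause}"
    return results, None


# Illustrative stages; the names and outputs are assumptions.
stages = [
    Stage("outline", lambda ctx: f"outline for {ctx['task']}"),
    Stage("draft", lambda ctx: f"draft from {ctx['results']['outline']}"),
]
results, paused_at = run_flow(stages, "release notes")
```

In this sketch `results` is keyed by stage name, which is one way to read "named results": you look up `results["draft"]` rather than picking up where a previous step left off.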